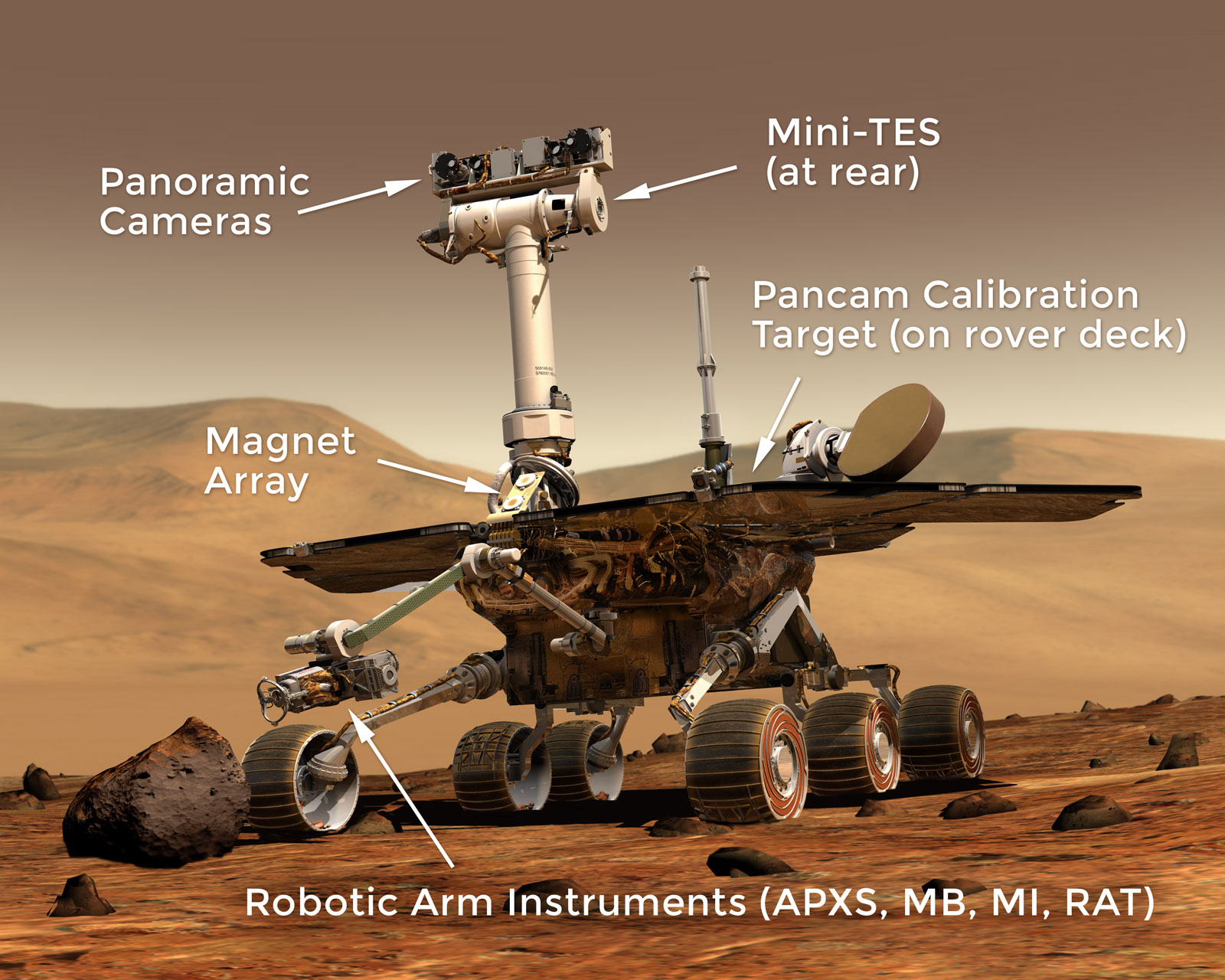

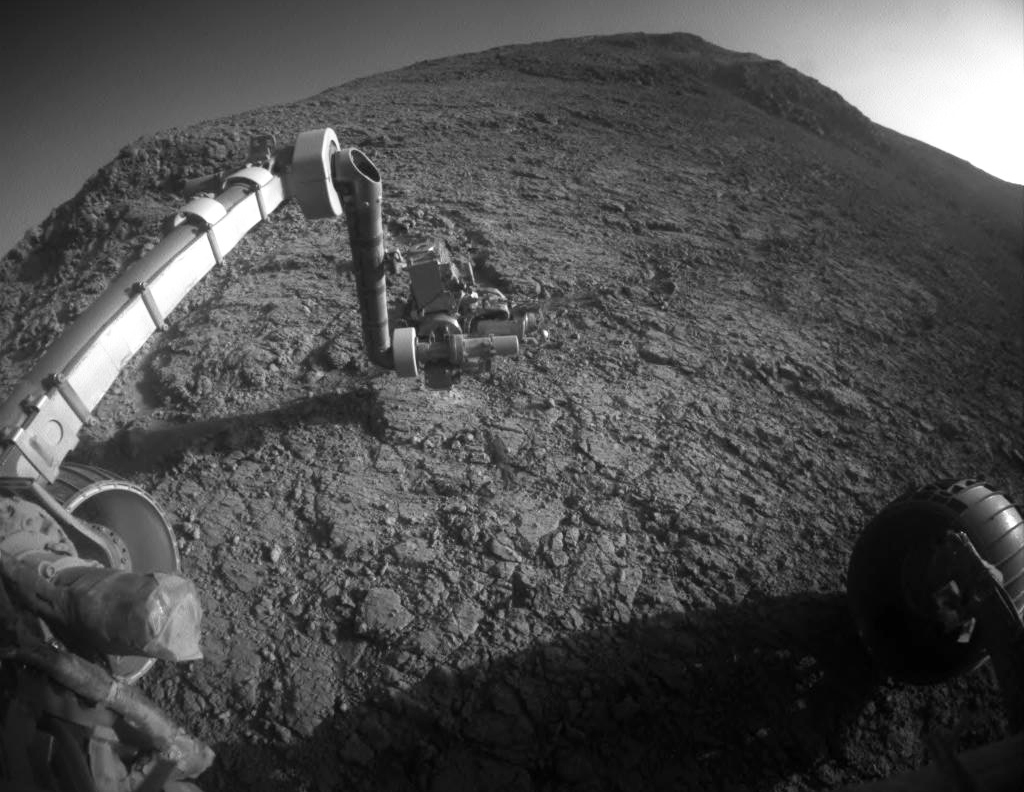

Mars Exploration Rovers

Science Instruments

Spirit and Opportunity’s science instruments are state-of-the-art tools for acquiring information about Martian geology, atmosphere, environmental conditions, and potential biosignatures.

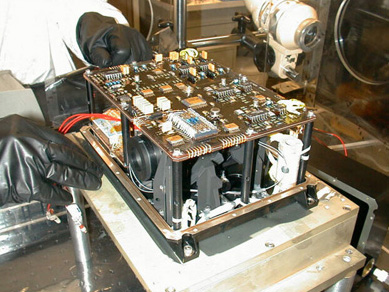

Cameras

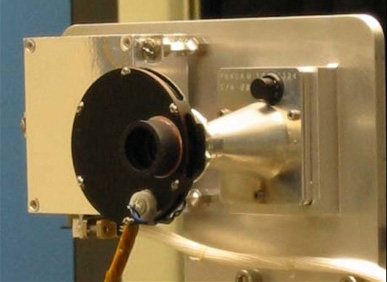

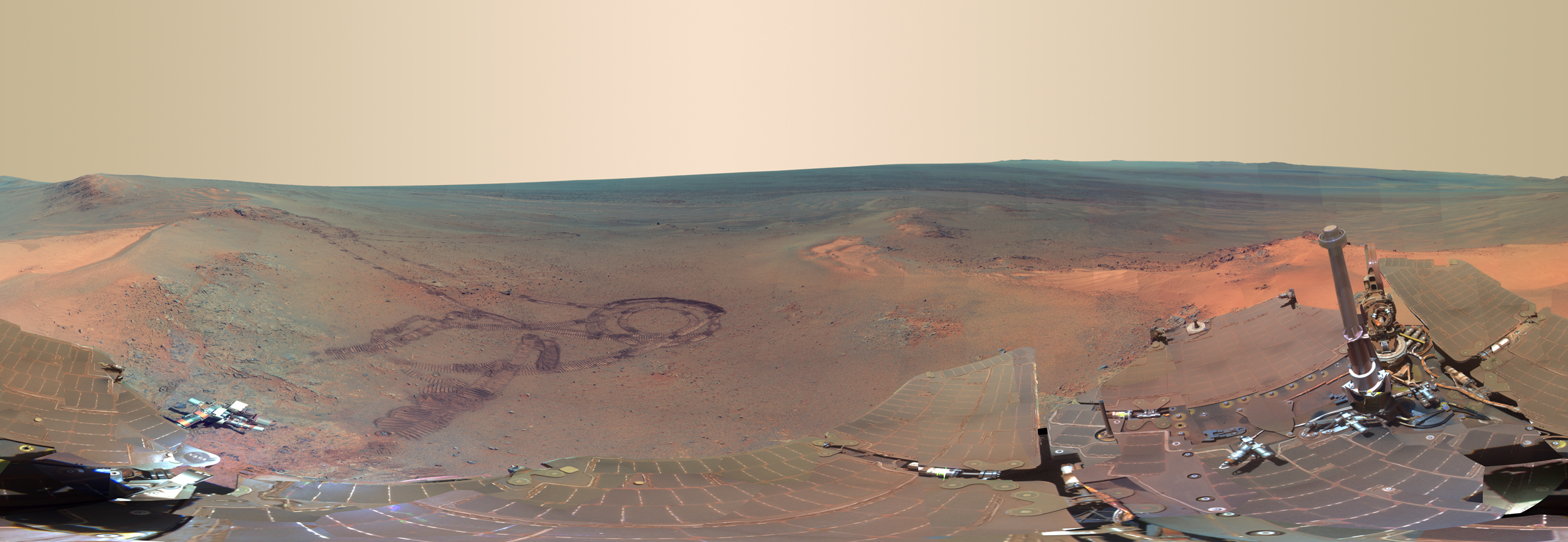

Pancam

The Panoramic Camera is known by its nickname, Pancam. Pancam consisted of two cameras that worked in combination to take detailed, multi-wavelength, 3-D panoramic pictures of the Martian landscape surrounding the rover. The rovers' Pancam Mast Assembly stood 1.4 meters (about 5 feet) tall, measured from the base of the rover wheels. This height gave the cameras a "human geologist's" perspective and a wide field of view. The mast also acted as a periscope for the Mini-TES science instrument, which was housed inside the rover body for thermal reasons, and it gave the Pancams and the Navcams a better vantage point.

Tech Specs

| Spec | Details |
|---|---|
| Main Job | To take panoramic color images of the Martian surface, the sun, and the sky. |
| Location | Mounted on the rover mast at about the eye level of an average human, about 5 feet (1.5 meters) up, with about 11.8 inches (30 centimeters) between the two cameras. |
| Mass | ~9.5 ounces (270 grams) each |
| Power | 3 Watts for the CCD and electronics; 3.5 Watts for a small warmup heater. |
| Size | Small enough to fit in the palm of your hand. |
| Color Quality | 8 filters per eye covering 400-1100 nm (near-UV to near-IR), allowing full-color images and spectral analysis of minerals and the atmosphere. |
| Image Size | 1024 x 1024 pixels (equivalent to 20/20 human vision). |
| Image Resolution | ~0.04 inch (1 millimeter) per pixel at a distance of 9.8 feet (3 meters) from the rover. |
| Focal Length | ~1.5 inches (39 millimeters), with optimal focus from 5 feet (1.5 meters) to infinity. |
| Focal Ratio and Field of View | f/20; instantaneous field of view (IFOV) of 0.27 mrad per pixel; field of view (FOV) of 16.8 x 16.8 degrees. |
| Stereo Separation | ~11.8 inches (30 centimeters) between the two cameras. |
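
The Image Resolution and Stereo Separation rows follow from simple small-angle geometry: a pixel covers a patch roughly equal to the range times the IFOV, and two cameras a known baseline apart see a nearby feature shifted by a number of pixels that shrinks with distance. The sketch below is a simplified illustration, assuming idealized parallel cameras with the listed 0.27 mrad IFOV and 30 cm baseline; the function names are hypothetical, and actual Pancam stereo processing relies on full camera models rather than this approximation.

```python
# Values taken from the Pancam spec rows above.
IFOV_RAD = 0.27e-3   # instantaneous field of view per pixel, in radians
BASELINE_M = 0.30    # stereo separation between the two cameras, in meters


def pixel_footprint_m(distance_m: float) -> float:
    """Approximate size of the patch one pixel covers at a given range (small-angle approximation)."""
    return distance_m * IFOV_RAD


def stereo_range_m(disparity_px: float) -> float:
    """Range to a feature from its left/right shift in pixels.

    Assumes idealized, parallel cameras: the angular shift is about
    BASELINE_M / range, and each pixel subtends IFOV_RAD.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return BASELINE_M / (disparity_px * IFOV_RAD)


print(f"pixel footprint at 3 m: {pixel_footprint_m(3.0) * 1000:.2f} mm")
print(f"range for a 100-pixel disparity: {stereo_range_m(100):.1f} m")
```

At 3 meters the footprint works out to about 0.8 millimeter per pixel, consistent with the ~1 millimeter listed above, and a 100-pixel disparity corresponds to roughly 11 meters. Because range varies inversely with disparity, a one-pixel matching error matters much more for distant features than for nearby ones.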

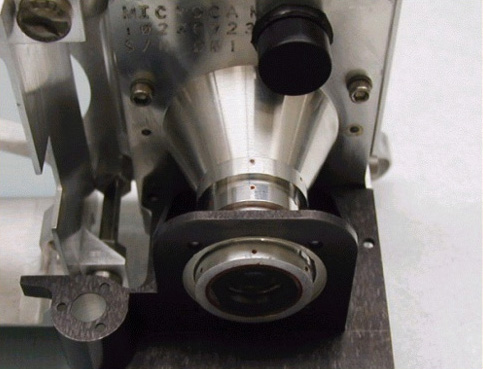

Microscopic Imager


Tech Specs

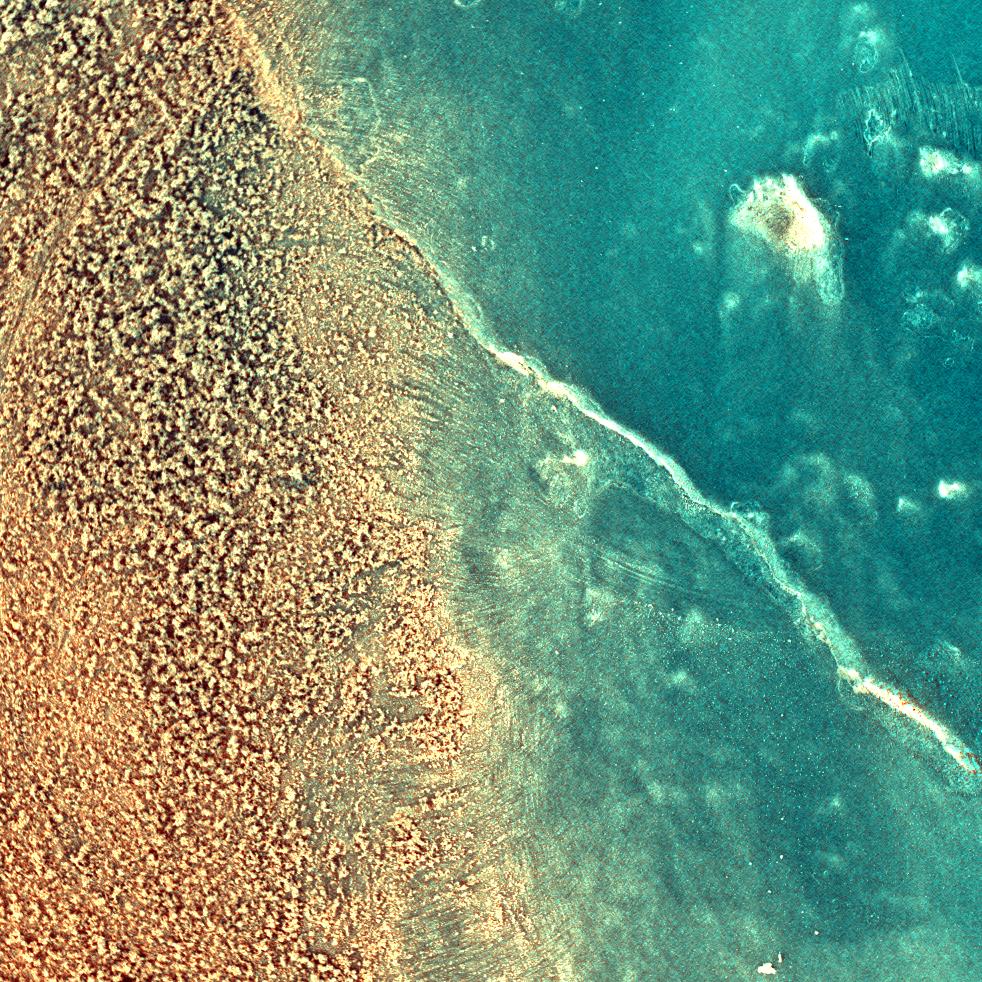

| Spec | Details |
|---|---|
| Main Job | To provide extreme close-up black-and-white views of rocks and soils, giving context for interpreting data about minerals and elements. |
| Location | Mounted on the turret at the end of the robotic arm. |
| Mass | ~7.5 ounces (210 grams); 10.2 ounces (290 grams) including the dust cover and contact sensor |
| Power | 2.5-4.3 Watts for the camera; 0.5 Watt to open or close the dust cover. |
| Size | 1.91 inches (48.6 millimeters) high, 2 inches (51 millimeters) long, and 1.61 inches (41 millimeters) wide. |
| Color Quality | Single broadband filter, so images are monochromatic (black and white). |
| Image Size | 1024 x 1024 pixels |
| Image Resolution | 31 microns (0.031 millimeter, or about 0.001 inch) per pixel. Able to resolve features as small as 0.004 inch (0.1 millimeter). |
| Focal Length | 0.8 inch (21 millimeters), with optimal focus at 2.67 inches (68 millimeters) |
| Focal Ratio and Field of View | f/15; IFOV of 30 +/- 1.5 micrometers per pixel on-axis |
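
Several Microscopic Imager rows can be cross-checked with simple arithmetic: the imaged patch is the pixel scale times the pixel count, a feature's size divided by the pixel scale gives the number of pixels it spans, and the aperture diameter follows from the usual definition of focal ratio (focal length divided by aperture). This is a minimal sketch using the listed values; the function names are hypothetical, and it assumes the same pixel scale in both image directions.

```python
# Values taken from the Microscopic Imager spec rows above.
PIXEL_SCALE_UM = 31.0    # microns per pixel at best focus
PIXELS_ACROSS = 1024     # image width in pixels
FOCAL_LENGTH_MM = 21.0   # focal length
F_NUMBER = 15.0          # focal ratio (f/15)


def field_width_mm(pixel_scale_um: float = PIXEL_SCALE_UM, pixels: int = PIXELS_ACROSS) -> float:
    """Width of the imaged patch: pixel scale times pixel count, converted to millimeters."""
    return pixel_scale_um * pixels / 1000.0


def pixels_spanned(feature_mm: float, pixel_scale_um: float = PIXEL_SCALE_UM) -> float:
    """Number of pixels a feature of the given size covers."""
    return feature_mm * 1000.0 / pixel_scale_um


def aperture_diameter_mm(focal_length_mm: float = FOCAL_LENGTH_MM, f_number: float = F_NUMBER) -> float:
    """Aperture diameter from the definition of focal ratio, N = f / D."""
    return focal_length_mm / f_number


print(f"field width: {field_width_mm():.1f} mm")
print(f"a 0.1 mm feature spans {pixels_spanned(0.1):.1f} pixels")
print(f"aperture diameter: {aperture_diameter_mm():.1f} mm")
```

With these numbers each image covers a patch roughly 32 millimeters (about 1.25 inches) across, and a 0.1-millimeter feature spans about 3 pixels, which is consistent with the stated ability to resolve features of that size.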

Spectrometers

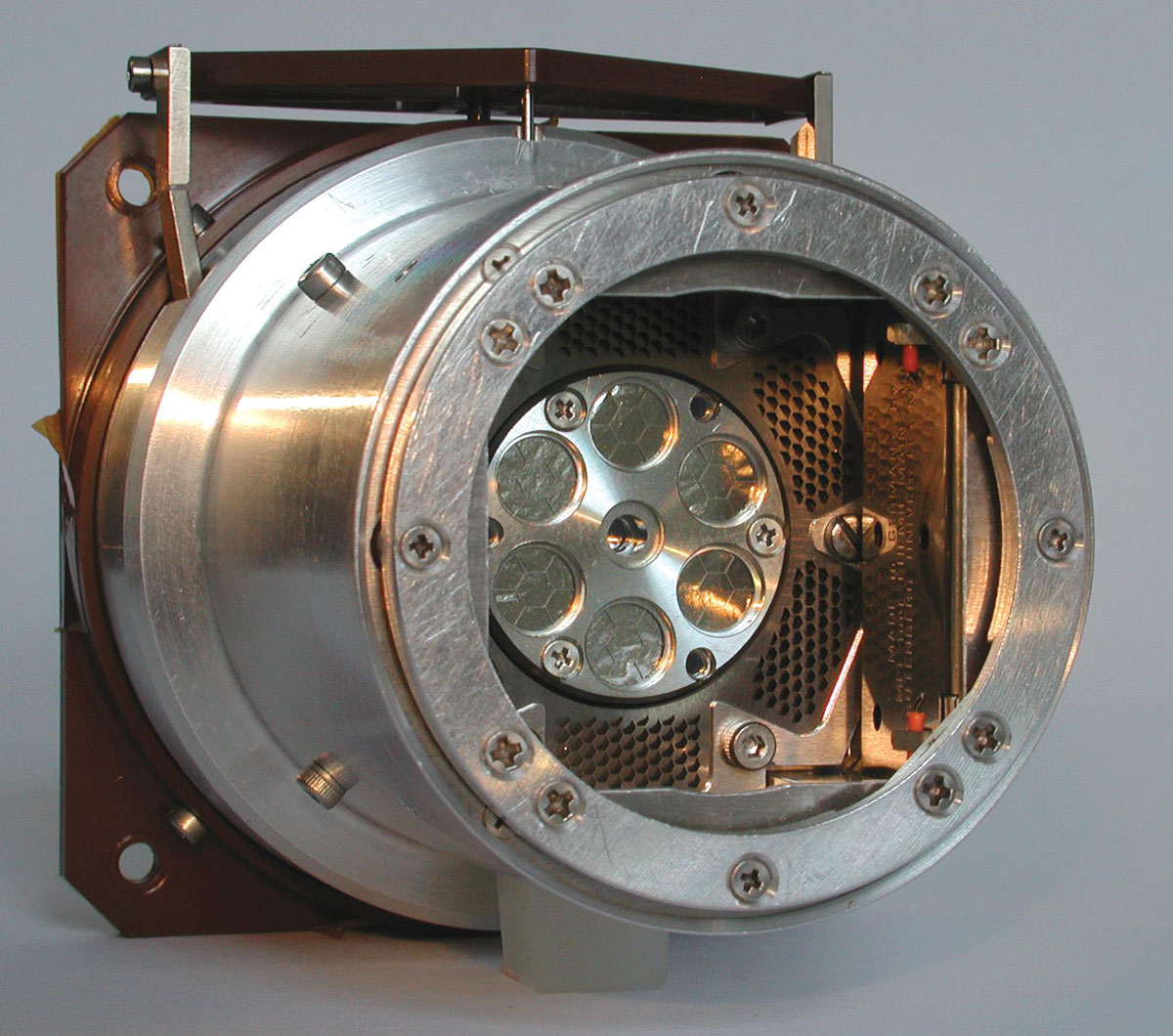

Miniature Thermal Emission Spectrometer

The Miniature Thermal Emission Spectrometer is called Mini-TES for short. Mini-TES measured the infrared light, or heat, emitted by minerals in rocks and soils; different minerals produce different infrared spectra. Mini-TES was specially tuned to look for minerals formed in water.

Tech Specs

| Spec | Details |
|---|---|
| Main Job | To determine the mineralogy of rocks and soils from a distance by detecting their patterns of thermal radiation. |
| Location | Mostly inside the rover body. The rover's camera mast doubled as a periscope for Mini-TES. |
| Mass | ~5.3 pounds (2.4 kilograms) |
| Power | 5.6 Watts (operating); 0.3 Watt (daily average) |
| Size | 9.25 x 6.4 x 6.1 inches (23.5 x 16.3 x 15.5 centimeters) |
| Spectral Range and Sampling | 5 to 29.5 µm (1997.06 to 339.50 cm⁻¹), with a spectral sampling of 9.99 cm⁻¹ |
| Focal Ratio and Field of View | f/12; field of view of 8 or 20 mrad |
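
The Mini-TES range is quoted both in wavelength (µm) and in wavenumber (cm⁻¹). With wavelength in micrometers, the two are related by wavenumber = 10,000 / wavelength. The sketch below, with hypothetical function names, checks the listed band edges and shows that a constant sampling step in wavenumber is not constant in wavelength.

```python
# Band edges and spectral sampling as listed in the Mini-TES spec rows above.
HIGH_CM = 1997.06   # short-wavelength edge, cm^-1
LOW_CM = 339.50     # long-wavelength edge, cm^-1
STEP_CM = 9.99      # spectral sampling interval, cm^-1


def um_to_wavenumber(wavelength_um: float) -> float:
    """Wavelength in micrometers -> wavenumber in cm^-1 (1 cm = 10,000 um)."""
    if wavelength_um <= 0:
        raise ValueError("wavelength must be positive")
    return 1.0e4 / wavelength_um


def wavenumber_to_um(wavenumber_cm: float) -> float:
    """Wavenumber in cm^-1 -> wavelength in micrometers."""
    if wavenumber_cm <= 0:
        raise ValueError("wavenumber must be positive")
    return 1.0e4 / wavenumber_cm


def sampling_in_um(wavelength_um: float, step_cm: float = STEP_CM) -> float:
    """Approximate wavelength spacing of one wavenumber step near a given wavelength.

    From lambda = 1e4 / nu, |d(lambda)| is about lambda**2 * d(nu) / 1e4.
    """
    return wavelength_um ** 2 * step_cm / 1.0e4


print(f"band edges: {wavenumber_to_um(HIGH_CM):.2f} to {wavenumber_to_um(LOW_CM):.2f} um")
for wl in (5.0, 10.0, 29.5):
    print(f"near {wl} um, one {STEP_CM} cm^-1 step spans ~{sampling_in_um(wl):.3f} um")
```

The listed wavenumber edges convert back to about 5.01 and 29.46 µm, matching the rounded 5 to 29.5 µm range. A single 9.99 cm⁻¹ step corresponds to only about 0.025 µm near 5 µm but about 0.87 µm near 29.5 µm.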

Mössbauer Spectrometer

The Mössbauer Spectrometer on the Mars Exploration Rovers, Spirit and Opportunity, is known as MB. MB determined the composition and abundance of iron-bearing minerals in geological samples studied by the rover. It could be placed directly against rock and soil samples for close-up study, and it also examined magnetic dust collected by the rover's Magnet Array.

Tech Specs

| Spec | Details |
|---|---|
| Main Job | To identify iron-bearing minerals, yielding information about early Martian environmental conditions. |
| Location | Attached to the turret at the end of the rover arm |
| Size | Small enough to hold in your hand |
| Sampled Area | 0.59 inch (15 millimeters) to 0.79 inch (20 millimeters) in diameter on the sample surface, depending on the actual distance to the sample and its shape. |
| Data Acquisition | One Mössbauer measurement took about 12 hours. |

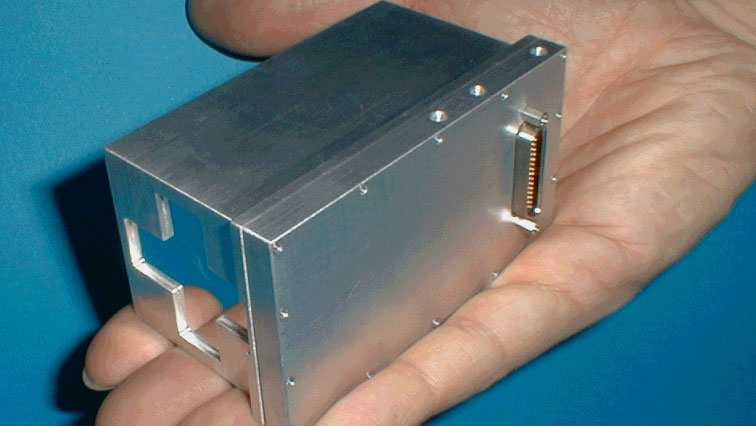

Alpha Particle X-Ray Spectrometer

The Alpha Particle X-Ray Spectrometer on the Mars Exploration Rovers, Spirit and Opportunity, is also called the APXS. The APXS revealed the elemental chemistry of rocks and soils by measuring the distinctive ways different materials respond to two kinds of radiation: X-rays and alpha particles.

Tech Specs

| Spec | Details |
|---|---|
| Main Job | To determine the elements that make up rocks and soils, providing information about crustal formation, weathering processes, and water activity on Mars. |
| Location | Attached to the turret at the end of the rover arm |
| Size | Small enough to hold in your hand (3.3 x ~2 inches, or 84 x 52 millimeters) |
| Sampled Area | ~1.5 inches (38 millimeters) in diameter |
| Data Acquisition | Most APXS measurements were taken at night and required at least 10 hours of accumulation time. |

Rock Abrasion Tool

The Rock Abrasion Tool on the Mars Exploration Rovers, Spirit and Opportunity, is known as the RAT. The RAT's rotating grinding teeth gnawed into the surface of Martian rock to reveal fresh mineral surfaces for analysis by the rover's science instruments.

Tech Specs

| Spec | Details |
|---|---|
| Main Job | To use a grinding wheel to remove dust and weathered rock, exposing fresh rock underneath. |
| Location | Attached to the turret at the end of the rover arm |
| Mass | Less than 720 grams |
| Weight | About 1.6 pounds on Earth, or 0.6 pounds on Mars |
| Size of Exposed Area | Able to create a hole ~1.8 inches (45 millimeters) in diameter and 0.2 inch (5 millimeters) deep in a rock on the Martian surface. |
| Grinding Speed | The RAT could grind through hard volcanic rock in about two hours. |
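
The Weight row is the listed mass scaled by surface gravity, and the Size of Exposed Area row implies how much rock one full grind removes. The sketch below reproduces both figures; the surface-gravity values are standard approximations that do not come from the spec table, the hole is assumed to be a flat-bottomed cylinder, and the function names are hypothetical.

```python
import math

# Approximate standard surface gravities in m/s^2 (assumed; not from the spec table).
G_EARTH = 9.807
G_MARS = 3.721
LB_PER_KG = 2.20462  # pounds per kilogram


def weight_lb(mass_kg: float, gravity: float) -> float:
    """Weight in pounds-force: Earth-equivalent pounds scaled by relative surface gravity."""
    return mass_kg * LB_PER_KG * gravity / G_EARTH


def hole_volume_cm3(diameter_mm: float, depth_mm: float) -> float:
    """Volume of a flat-bottomed cylindrical hole, converted from mm^3 to cm^3."""
    radius_mm = diameter_mm / 2.0
    return math.pi * radius_mm ** 2 * depth_mm / 1000.0


RAT_MASS_KG = 0.720  # the table gives this as an upper bound

print(f"weight on Earth: {weight_lb(RAT_MASS_KG, G_EARTH):.1f} lb")
print(f"weight on Mars:  {weight_lb(RAT_MASS_KG, G_MARS):.1f} lb")
print(f"rock removed per full grind: ~{hole_volume_cm3(45.0, 5.0):.1f} cm^3")
```

Mars's surface gravity is roughly 38 percent of Earth's, which is why 1.6 pounds becomes about 0.6 pounds. Under the cylinder assumption, a full 45-by-5-millimeter grind removes about 8 cubic centimeters of rock.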

Magnet Array

The Magnet Array was a science experiment on the Mars Exploration Rovers, Spirit and Opportunity, that collected dust. Magnetic grains in Martian dust are tiny pieces of the Red Planet's past. The Magnet Array collected the dust for analysis by the science instruments, which identified its composition and offered clues to the history of the dust particles.

| Spec | Details |
|---|---|
| Main Job | To collect airborne dust for analysis by the science instruments. |
| Location | Seven magnets on each rover: four magnets were carried by the Rock Abrasion Tool (RAT); two magnets (one capture magnet and one filter magnet) were mounted on the front of the rover; and another magnet (the sweep magnet) was mounted on top of the rover deck, in view of the Pancam. |
| Size | The RAT carried four magnets, each 0.27 inch (7 millimeters) in diameter and 0.35 inch (9 millimeters) thick. The two magnets on the front of the rover were each ~1 inch (25 millimeters) in diameter. The magnet on the rover deck was 0.35 inch (9 millimeters) in diameter. |
| Magnet Purposes | Capture magnet: designed to be as strong as possible so that it captured any magnetic particle within range. Filter magnet: designed to capture primarily the most magnetic particles. Sweep magnet: designed so that only non-magnetic particles could settle in its center. RAT magnets: designed to detect magnetic minerals in the rock material ejected during grinding. |

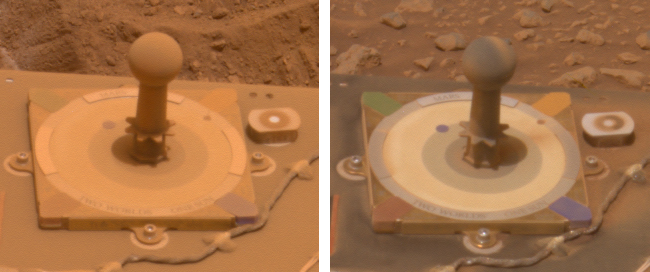

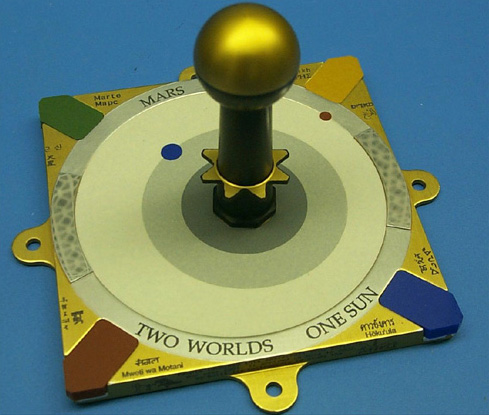

Calibration Targets

The instruments used calibration targets, including a sundial, to ensure that the colors, brightness levels, and other information they recorded were accurate.

| Spec | Details |
|---|---|
| Main Job | Objects with known properties that acted as reference points, helping scientists fine-tune observations from the imagers and from the other science instruments. |
| Location | The Pancam calibration target was a sundial mounted on the rover deck. The Mössbauer Spectrometer calibration target was a thin slab of magnetite-rich rock mounted under the rover's solar panels (the APXS could also use it). The APXS calibration target was on the inside of its dust doors. Mini-TES had an internal target in the Pancam Mast Assembly, as well as an external target on the rover deck near the low-gain antenna. |
| Function | The colored blocks in the corners of the sundial were used to calibrate the color in images of the Martian landscape. Pictures of the shadow cast by the sundial's center post allowed scientists to properly adjust the brightness of each Pancam image. Measurements of the Mössbauer, APXS, and Mini-TES calibration targets helped verify that those instruments were working properly. |
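
A calibration target works because its properties are known in advance, so readings of it can be used to correct readings of the scene. As a generic illustration only, and not the actual Pancam, Mössbauer, APXS, or Mini-TES processing, the sketch below fits a straight-line gain and offset from raw readings of reference patches to their known values, then applies that mapping to a scene reading. The patch values and function names are hypothetical.

```python
from typing import Sequence, Tuple


def fit_gain_offset(raw: Sequence[float], known: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of known = gain * raw + offset over the reference patches."""
    n = len(raw)
    if n < 2 or n != len(known):
        raise ValueError("need at least two paired patch measurements")
    mean_r = sum(raw) / n
    mean_k = sum(known) / n
    sxx = sum((r - mean_r) ** 2 for r in raw)
    if sxx == 0:
        raise ValueError("patch readings must not all be equal")
    sxy = sum((r - mean_r) * (k - mean_k) for r, k in zip(raw, known))
    gain = sxy / sxx
    offset = mean_k - gain * mean_r
    return gain, offset


def apply_calibration(raw_value: float, gain: float, offset: float) -> float:
    """Convert a raw scene reading to a calibrated value using the fitted line."""
    return gain * raw_value + offset


# Hypothetical raw readings of three reference patches and their known reflectances.
raw_patches = [200.0, 1200.0, 2200.0]
known_reflectance = [0.05, 0.30, 0.55]

gain, offset = fit_gain_offset(raw_patches, known_reflectance)
print(f"gain = {gain:.5f}, offset = {offset:.3f}")
print(f"raw scene value 1700 -> reflectance {apply_calibration(1700.0, gain, offset):.3f}")
```

A linear gain-and-offset model is the simplest possible choice; real instrument calibration typically has to account for additional effects, such as changing illumination, which the sundial's shadow was used to help with.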